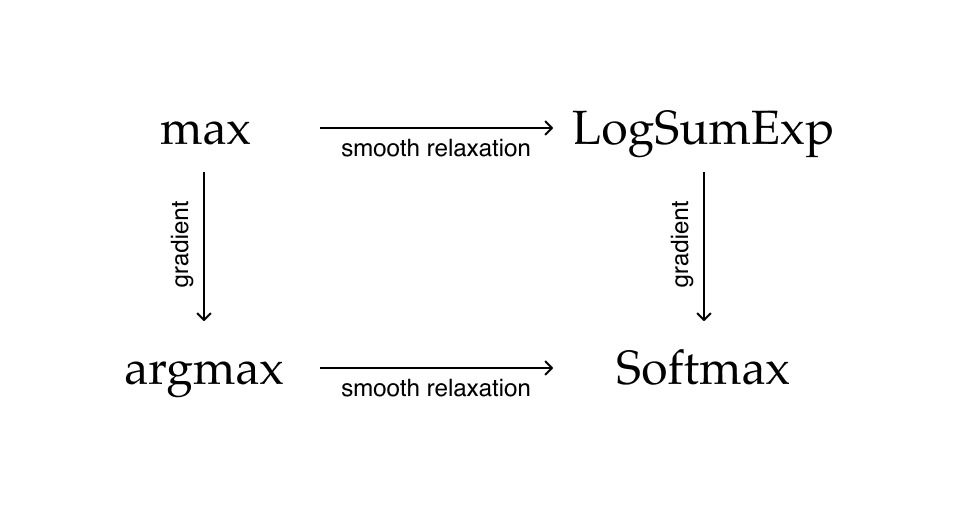

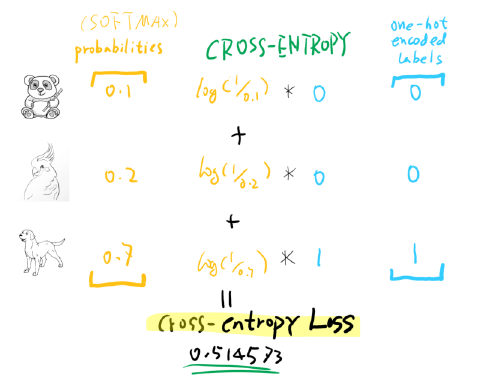

The cross-entropy loss measures the difference between two probability distributions: the true distribution of the target variable and the distribution the model predicts. It is a scalar value, which is why it can serve as a cost function in machine learning models. The core concept behind cross-entropy loss is entropy, a measure of the amount of uncertainty in a random variable. Entropy is the expected value of the negative logarithm of the probability assigned to each possible event. Essentially, you are trying to measure how uncertain the model is that the predicted label is the true label. For the predictions to form a distribution in the first place, the raw outputs are typically passed through the softmax function, an activation function used in neural networks to convert the input values into a probability distribution over multiple classes.
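To make the pieces above concrete, here is a minimal sketch in plain Python of softmax, entropy, and cross-entropy. The function names, the example scores in `logits`, and the small `eps` guard against `log(0)` are illustrative assumptions, not something taken from a particular library.

```python
import math

def softmax(logits):
    """Convert raw scores into a probability distribution."""
    # Subtracting the maximum keeps exp() from overflowing
    # and does not change the resulting probabilities.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(p):
    """Shannon entropy H(p) = -sum_i p_i * log(p_i), in nats."""
    # Terms with p_i == 0 contribute nothing and are skipped.
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def cross_entropy(p_true, q_pred, eps=1e-12):
    """Cross-entropy H(p, q) = -sum_i p_i * log(q_i), in nats."""
    # eps is an assumed guard so that log() never receives 0.
    return -sum(
        pi * math.log(max(qi, eps))
        for pi, qi in zip(p_true, q_pred)
        if pi > 0
    )

logits = [2.0, 1.0, 0.1]   # hypothetical raw scores for three classes
probs = softmax(logits)    # predicted distribution, sums to 1
target = [1.0, 0.0, 0.0]   # one-hot true distribution: class 0 is correct
loss = cross_entropy(target, probs)

print("predicted distribution:", probs)
print("cross-entropy loss:", loss)
```

With a one-hot target, the sum collapses to a single term, so the loss is simply the negative log of the probability the model assigned to the true class. The more confident the model is in the correct label, the closer the loss gets to zero.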

In machine learning, a loss function is a measure of the difference between the actual values and the predicted values of a model. The loss function is used to optimize the model’s parameters, so that the predicted values are as close as possible to the actual values. Sometimes the loss function is referred to as a cost function in deep learning, as it indicates how wrong the current model parameters are, i.e. how costly it is to update them to be less wrong.

Visualization of the gradient descent trajectory for a nonconvex function.
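The caption above refers to gradient descent on a nonconvex function. As a rough sketch of what such a trajectory looks like, the snippet below runs plain gradient descent on a one-dimensional toy loss. The function `x**4 - 3*x**2 + x`, the learning rate, the step count, and the two starting points are all assumptions chosen for illustration; they are not taken from the figure.

```python
def loss(x):
    """Hypothetical nonconvex loss with two separate local minima."""
    return x**4 - 3 * x**2 + x

def grad(x):
    """Derivative of the toy loss with respect to x."""
    return 4 * x**3 - 6 * x + 1

def gradient_descent(x0, lr=0.01, steps=500):
    """Repeatedly step against the gradient and record every visited point."""
    x = x0
    trajectory = [x]
    for _ in range(steps):
        x = x - lr * grad(x)
        trajectory.append(x)
    return trajectory

# Two runs that differ only in their starting point.
traj_right = gradient_descent(x0=2.0)
traj_left = gradient_descent(x0=-2.0)

for name, traj in [("start at  2.0", traj_right), ("start at -2.0", traj_left)]:
    x_final = traj[-1]
    print(f"{name}: x = {x_final:.4f}, loss = {loss(x_final):.4f}")
```

The two runs settle in different minima, and only the run starting on the left reaches the lower one. That dependence on the starting point is what makes nonconvex losses harder to optimize than convex ones.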
