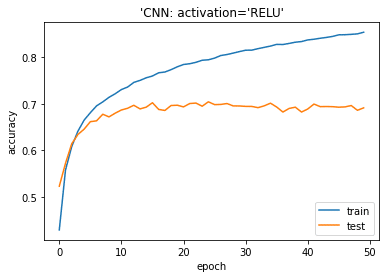

Life is a boomerang: what you give comes back to you. So, to receive love and care, always be generous and caring to everyone and practice kindness towards every soul. This thought has been put into practice in a few countries as 'Caffe Sospeso,' which means pending coffee or suspended coffee. Under this custom, a cup of coffee is paid for in advance for someone else as an anonymous act of solidarity and generosity. The tradition made people generous towards each other: they pay for two coffees but receive and consume only one. Later, another person can claim the free coffee as the good karma of whoever bought it. The Coffee Karma mobile app turns this thoughtful act of sharing into a mobile application.

Share & Receive Good Karma through Coffee Karma

Coffee Karma is a fun and interactive mobile application for sharing and receiving Coffee Karma with your friends and loved ones. The app is conceived and crafted to follow the Caffe Sospeso gesture and spread kindness digitally. Its features help people share their coffee karma with others and carry this benevolent practice further. The app gives you a platform to establish your identity. Whenever you visit a coffee or beverage shop, buy a cup of coffee, record a video, and share it with a friend or family member in your contact list; the video delivers the cup of coffee to them as Good Karma. Together, these features keep Coffee Karma centered on sharing and caring.

Buy Coffee, Share Good Karma: With Coffee Karma, connect with all your friends and spread good karma. The app provides a simple and flexible way to share and receive coffee, guiding the user through a flexible in-app flow. Buy a coffee from your favorite cafe and share it with a friend.

Almost every day a new innovation is announced in the ML field, to such an extent that the number of research papers published about machine learning is growing faster than Moore's law. For example, the second AI winter ended once the vanishing gradient problem was identified and the ReLU activation function was introduced. In 2017, Google researchers found that an extended version of the sigmoid function named Swish outperforms ReLU. Then it was shown that an extended version of Swish named E-Swish outperforms many other activation functions, including both ReLU and Swish. Advanced frameworks cannot always keep up with these innovations. For example, you cannot use Swish-based activation functions in Keras today. Support might appear in a later patch, but you may need a new activation function before the related patch is pushed. So, this post will guide you through using a custom activation function that is not shipped with Keras and TensorFlow, such as Swish or E-Swish.

All you need is to create your custom activation function. In this case, I'll use swish, which is x times sigmoid. Besides, I include it in a convolutional neural network model.

```python
# 1st convolution layer: 32 is the number of filters and (3, 3) is the size of the filter
model.add(Conv2D(32, (3, 3), activation=swish))
# apply 64 filters of size (3x3) on the 2nd convolution layer
model.add(Conv2D(64, (3, 3), activation=swish))
model.add(Dense(512, activation=swish))
model.add(Dense(num_classes, activation='softmax'))
```

Remember that we use this activation function in the feed-forward step, whereas we need its derivative in backpropagation. We just define the activation function; we do not provide its derivative. The framework knows how to differentiate it for backpropagation. This comes from importing the keras backend module. If you design the swish function without keras.backend, fitting will fail.

So, we've covered how to include a new activation function in the learning process with the Keras / TensorFlow pair. Picking the most suitable activation function is as much an art as choosing the structure (number of hidden layers, number of nodes in the hidden layers) and the learning parameters (learning rate, number of epochs). Now, you can design your own activation function or use any newly introduced one, similar to those in the picture. My friend and colleague Giray inspired me to write this post. These are the dance moves of the most common activation functions in deep learning.
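To make the forward/backward distinction concrete, here is a minimal, framework-free sketch in plain Python. It assumes the simplest form of Swish, x times sigmoid(x), and the helper names (`sigmoid`, `swish`, `swish_derivative`, `numerical_derivative`) are illustrative only; they are not part of Keras or TensorFlow. A framework derives the gradient automatically, so this hand-written derivative is only there to show what backpropagation needs.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, written to avoid overflow for large negative x."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def swish(x: float) -> float:
    """Swish activation used in the feed-forward step: x * sigmoid(x)."""
    return x * sigmoid(x)


def swish_derivative(x: float) -> float:
    """Derivative needed in backpropagation (product rule):
    d/dx [x * s(x)] = s(x) + x * s(x) * (1 - s(x))
    """
    s = sigmoid(x)
    return s + x * s * (1.0 - s)


def numerical_derivative(f, x: float, h: float = 1e-5) -> float:
    """Central finite difference, used only to sanity-check the formula."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


if __name__ == "__main__":
    for x in (-3.0, -1.0, 0.0, 1.0, 3.0):
        analytic = swish_derivative(x)
        numeric = numerical_derivative(swish, x)
        print(f"x={x:+.1f}  swish={swish(x):+.6f}  "
              f"analytic={analytic:+.6f}  numeric={numeric:+.6f}")
```

The analytic and numerical derivatives should agree closely, which is the same result an automatic differentiation engine would produce when the activation is built from backend operations.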
